Vercel Agent Skills: Solving AI's Outdated Training Problem

AI coding agents excel at writing code but struggle with frameworks that evolve faster than model training cycles. Vercel's agent skills introduce a new primitive that packages version-matched documentation, best practices, and complete workflows into an installable format—distinct from MCP servers, tools, or system prompts.

AI coding agents have a memory problem. They're trained on snapshots of framework documentation—often months or years old—then deployed into environments where libraries ship breaking changes every quarter. Ask an agent to scaffold a Next.js app, and it might suggest patterns deprecated two versions ago, because that's what existed in its training corpus. The code works, technically, but it's already legacy.

Vercel's agent skills address this gap by packaging version-matched documentation, task-specific workflows, and guardrails into installable primitives that agents can use. This isn't just fresher docs—it's a category of tooling designed to keep AI agents effective as frameworks evolve faster than training cycles allow.

The Training Data Time Lag

Large language models freeze their knowledge at training time. If a model's cutoff date is March 2024, it has no awareness of features shipped in April or best practices that emerged over the summer. For frameworks like React or Next.js, where the community's understanding of performance patterns and server component usage shifts rapidly, this creates a disconnect. An agent might confidently recommend getServerSideProps, the Pages Router data-fetching API, when current guidance favors fetching data directly in server components, simply because that is what the training data emphasized.

Skills solve this by treating knowledge as installable software. Instead of relying on static training data, agents can pull in current guidance for specific tasks like React performance optimization or web deployment, complete with version numbers and context about when to apply each technique.
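As a rough illustration of what "version-matched" guidance could mean in practice, the sketch below picks advice based on the framework version installed in a project. The `Guidance` shape, the `selectGuidance` helper, and the sample entries are all hypothetical and are not taken from Vercel's skills; they only show the idea of keying advice to a version instead of to a training snapshot.

```typescript
// Hypothetical shape for one piece of versioned guidance (not Vercel's schema).
interface Guidance {
  topic: string;
  minMajor: number; // first framework major version the advice applies to
  advice: string;
}

// Illustrative entries only; a real skill would ship its own content.
const dataFetchingGuidance: Guidance[] = [
  { topic: "data-fetching", minMajor: 9, advice: "Use getServerSideProps in the Pages Router." },
  { topic: "data-fetching", minMajor: 13, advice: "Fetch data inside async server components in the App Router." },
];

// Extract the major version from a semver-like string such as "15.1.0" or "^15.1.0".
function majorOf(version: string): number {
  const match = version.match(/(\d+)/);
  if (!match) throw new Error(`Unrecognized version: ${version}`);
  return Number(match[1]);
}

// Pick the newest guidance whose minimum version does not exceed the installed one.
function selectGuidance(entries: Guidance[], installed: string): Guidance | undefined {
  const major = majorOf(installed);
  return entries
    .filter((e) => e.minMajor <= major)
    .sort((a, b) => b.minMajor - a.minMajor)[0];
}
```

With these sample entries, a project on "15.1.0" resolves to the server component advice, while a project on "12.3.4" still gets the getServerSideProps advice that matches what it actually runs.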

What Makes Skills Different from Existing Primitives

The AI tooling space already has MCP servers (which expose atomic capabilities like file access), tools (function calls that agents can invoke), and system prompts (general instructions about behavior). Skills differentiate themselves by packaging complete workflows—not just "how to run a deployment script," but "when to deploy, what to check first, which configurations to avoid, and how to handle errors."

An MCP server might give an agent the ability to read a database. A tool might let it execute a query. A skill packages the workflow—understanding which queries are safe for an agent to run autonomously, which require human review, and what context from the codebase informs those decisions. It's instructions plus scripts plus guardrails, bundled into a format that agents can install and apply to domain-specific problems.
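To make the guardrail idea concrete, here is a minimal sketch of the kind of check a skill might bundle alongside its instructions: a function that decides whether a query can run autonomously or should go to a human. The `classifyQuery` name and the keyword-based rule are assumptions made for illustration; a real guardrail would need a proper SQL parser and knowledge of the codebase.

```typescript
type Decision = "run" | "needs-review";

// Hypothetical guardrail: allow single read-only statements, escalate everything else.
// This keyword check is only a sketch and is not safe enough for production use.
function classifyQuery(sql: string): Decision {
  const normalized = sql.trim().toLowerCase();
  const readOnly = normalized.startsWith("select");
  // Several statements could hide a write after a read, so treat them as risky.
  const singleStatement = !normalized.replace(/;\s*$/, "").includes(";");
  return readOnly && singleStatement ? "run" : "needs-review";
}
```

The point of the design is the default: anything the check does not positively recognize as safe falls through to human review, which is the "guardrails" part of the bundle.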

How Skills Work: Packaging Context for Agents

Skills are structured packages that combine documentation with executable components. For a web deployment skill, that means not just "here's how to push to production," but version-specific deployment patterns for Next.js 15, error-handling workflows that account for edge runtime constraints, and contextual flags that tell the agent when to escalate decisions to a human developer.
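One way to picture such a package is a folder whose main file starts with a short metadata header followed by free-form instructions. The sketch below assumes that layout (a header between `---` lines with `name` and `description` fields) and parses it with a deliberately small helper. The `web-deploy` skill, its contents, and the `parseSkill` function are hypothetical, and the parser handles only flat `key: value` lines, not full YAML.

```typescript
// Assumed layout: metadata between '---' lines, then the instructions for the agent.
const exampleSkill = [
  "---",
  "name: web-deploy",
  "description: Deploy a Next.js 15 app and escalate risky changes to a human.",
  "---",
  "1. Run the build and stop on errors.",
  "2. Ask for human approval before changing production environment variables.",
].join("\n");

interface ParsedSkill {
  meta: Record<string, string>;
  instructions: string;
}

// Minimal parser for flat `key: value` headers; not a full YAML implementation.
function parseSkill(source: string): ParsedSkill {
  const lines = source.split("\n");
  if (lines[0] !== "---") throw new Error("missing metadata header");
  const end = lines.indexOf("---", 1);
  if (end === -1) throw new Error("unterminated metadata header");
  const meta: Record<string, string> = {};
  for (const line of lines.slice(1, end)) {
    const idx = line.indexOf(":");
    // Split on the first colon only, so values may contain colons themselves.
    if (idx > 0) meta[line.slice(0, idx).trim()] = line.slice(idx + 1).trim();
  }
  return { meta, instructions: lines.slice(end + 1).join("\n").trim() };
}
```

Keeping the metadata small and the instructions as plain text means an agent can read the description to decide whether the skill applies, then load the full workflow only when it does.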

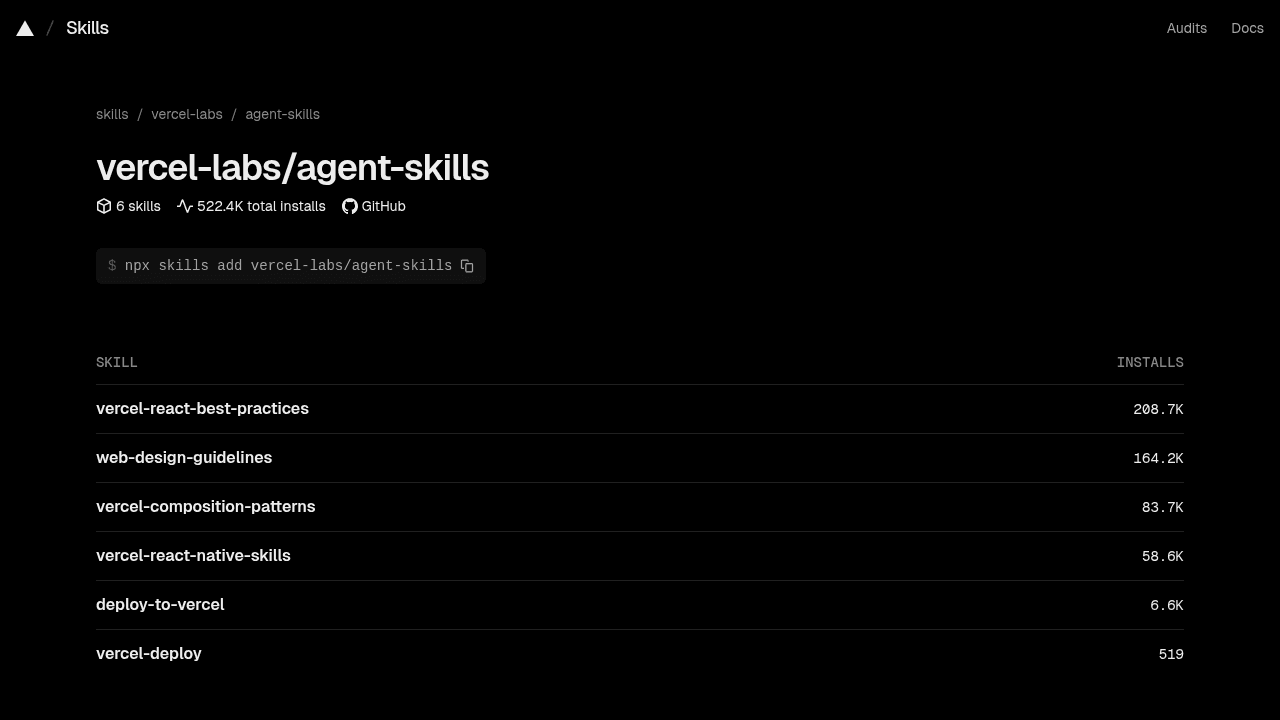

Vercel launched the skills CLI in January 2026, enabling installation across 16 different agents, a recognition that this infrastructure needs to work across the agent ecosystem, not just within Vercel's own tooling. The rapid adoption (tens of thousands of installs in the first weeks) suggests developers were already patching this gap manually by writing custom system prompts or maintaining agent-specific documentation repos.

Telemetry Concerns and User Feedback

Some users have raised concerns about telemetry in the skills tooling, asking either for alternatives or for clearer documentation of what data is collected. In open tooling, transparency about what is tracked and why is a baseline expectation. This is the kind of friction that tends to get resolved through community dialogue.

Why This Needed to Exist

Agent skills fill a gap that existing primitives could not address. Framework evolution outpaces training cycles; that is a given in modern web development. But agents don't just need access to current information, which retrieval-augmented generation can provide. They need packaged expertise: the judgment calls, the "don't do this even though it's technically possible" warnings, and the workflows that experienced developers internalize but rarely write down.

For AI tool builders, this matters as a template. Any domain where knowledge evolves rapidly—API design patterns, security best practices, infrastructure-as-code conventions—faces the same challenge. Skills demonstrate one approach: treat expertise as software, version it alongside the frameworks it describes, and make it installable. It's infrastructure for keeping AI effective when the ground keeps shifting.

vercel-labs/agent-skills

Vercel's official collection of agent skills