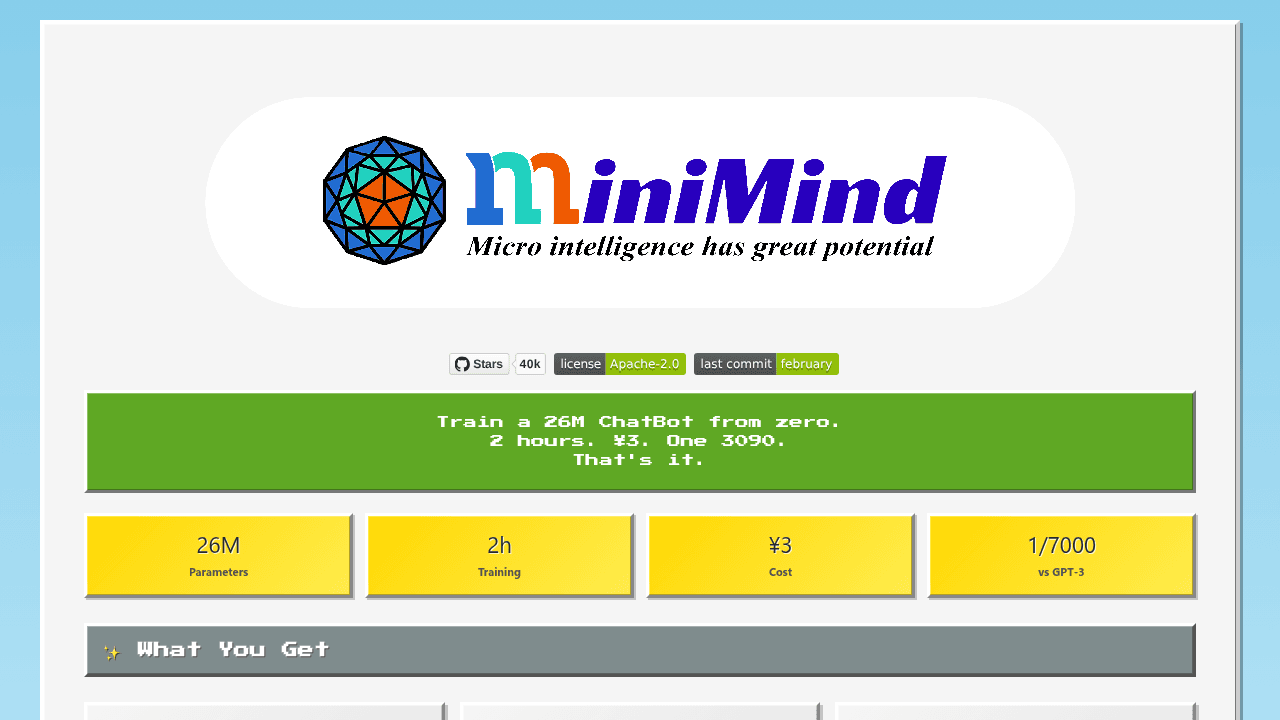

Train GPT on a Gaming PC: MiniMind's 2-Hour, $0.40 Pipeline

Language model training no longer has to happen in a data center. MiniMind provides the complete pipeline (pretraining, SFT, LoRA, DPO), optimized for a single consumer GPU. Students and researchers can train a small GPT-style model in an afternoon for the cost of a coffee and learn how LLMs work from first principles, without institutional compute.

Training a language model from scratch has traditionally required data center budgets and institutional compute. MiniMind delivers a complete GPT training pipeline, from pretraining through direct preference optimization (DPO), on a single consumer GPU in about two hours for 3 RMB (roughly $0.40).

The 26M-parameter model trains on hardware many ML engineers already own: one RTX 3090 and an afternoon. No cloud bills, no multi-GPU orchestration, no waiting weeks for results. For students and researchers who want to understand language models from first principles but lack institutional resources, this lowers the barrier to entry.
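To see why a model of this size fits comfortably on one card, the sketch below counts the parameters of a small Llama-style decoder and estimates the memory needed for weights, gradients, and Adam optimizer state. The configuration values (6,400-token vocabulary, width 512, 8 layers, 8 query heads sharing 2 key/value heads, SwiGLU hidden size 1,408, tied input/output embeddings) are assumptions picked to resemble MiniMind's smallest model, not values read from its source. The 16 bytes per parameter figure is a common rule of thumb for fp32 Adam training.

```python
# Rough parameter count and training-memory estimate for a small
# Llama-style decoder. All config defaults below are ASSUMPTIONS chosen to
# illustrate how a ~26M model adds up; check the repository's own config.

def decoder_params(vocab=6400, d_model=512, n_layers=8,
                   n_heads=8, n_kv_heads=2, ffn_hidden=1408,
                   tied_embeddings=True):
    head_dim = d_model // n_heads
    kv_dim = n_kv_heads * head_dim
    # Attention: Q and O are d x d; K and V are d x kv_dim (grouped-query attention)
    attn = 2 * d_model * d_model + 2 * d_model * kv_dim
    # SwiGLU feed-forward: gate, up and down projections, no biases
    ffn = 3 * d_model * ffn_hidden
    norms = 2 * d_model  # two RMSNorm weight vectors per block
    per_layer = attn + ffn + norms
    embed = vocab * d_model
    lm_head = 0 if tied_embeddings else vocab * d_model
    return n_layers * per_layer + embed + lm_head + d_model  # + final RMSNorm

def training_bytes(n_params, bytes_per_param=16):
    # ~16 bytes/param: fp32 weights (4) + grads (4) + Adam m and v (4 + 4).
    # Activations and framework overhead are NOT included.
    return n_params * bytes_per_param

n = decoder_params()
print(f"{n:,} params")                         # 25,829,888 params
print(f"{training_bytes(n) / 2**30:.2f} GiB")  # 0.38 GiB
```

Under these assumptions the count lands near the 26M headline, and weights, gradients, and optimizer state together need well under 1 GiB. On a 24 GB RTX 3090, activations (driven by batch size and sequence length) would dominate memory use.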

The Hardware Barrier That Kept LLM Training Institutional

LLM training has belonged to well-funded labs because of its compute requirements. Standard GPT implementations assume multi-GPU setups, cloud budgets in the thousands of dollars, and training runs measured in days or weeks. Individuals working on personal hardware faced a binary choice: watch from the sidelines or settle for inference-only experiments with pretrained models.

The gap between wanting to learn and having access left a generation of engineers understanding transformers conceptually but never touching the actual training loop. Reading papers about pretraining, supervised fine-tuning, and reinforcement learning from human feedback doesn't compare to running those processes yourself and watching loss curves respond to hyperparameter changes.

What MiniMind Actually Delivers: The Complete Pipeline on One GPU

MiniMind tackles the resource constraint by scaling down. The architecture uses 26M parameters—small enough to train quickly on consumer hardware, large enough to demonstrate core LLM concepts. The full pipeline includes pretraining on text corpora, supervised fine-tuning for instruction following, LoRA for parameter-efficient adaptation, and DPO for alignment.
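LoRA's savings come from training a low-rank update instead of the full weight matrix. The sketch below follows the standard formulation (frozen W, trainable A and B, output W·x + (α/r)·B·A·x, with B initialized to zero so training starts from the base model) and compares trainable-parameter counts for an assumed 512×512 projection. The shapes, ranks, and plain-list matrix code are illustrative; MiniMind's own LoRA module may differ in which layers it wraps and how it scales the update.

```python
# LoRA sketch: freeze the base weight W (d_out x d_in) and train a low-rank
# update B @ A, with A: r x d_in and B: d_out x r. Shapes and ranks are
# illustrative assumptions, not MiniMind's settings.

def full_finetune_params(d_in, d_out):
    return d_in * d_out

def lora_params(d_in, d_out, rank):
    return rank * d_in + d_out * rank

def lora_forward(x, W, A, B, alpha, rank):
    """y = W x + (alpha / rank) * B (A x), using plain Python lists."""
    def matvec(M, v):
        return [sum(m * vi for m, vi in zip(row, v)) for row in M]
    base = matvec(W, x)            # frozen path
    delta = matvec(B, matvec(A, x))  # trainable low-rank path
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]

d = 512
for r in (4, 8, 16):
    ratio = lora_params(d, d, r) / full_finetune_params(d, d)
    print(f"rank {r}: {lora_params(d, d, r):,} trainable vs "
          f"{full_finetune_params(d, d):,} ({ratio:.1%})")
```

At rank 8 the adapter trains about 3% of the projection's parameters. Because Adam keeps two state values per trainable parameter, optimizer memory shrinks by the same factor.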

Two hours on a single 3090 gets you through the entire cycle. The cost calculation—3 RMB based on typical Chinese cloud GPU pricing—matters less than the proof point: training an LLM costs roughly what you'd spend on coffee.
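The headline numbers reduce to simple arithmetic. The 2-hour and 3 RMB figures come from the article; the exchange rate is an assumption (rates move), so treat the dollar figure as approximate.

```python
# Back-of-envelope cost check. TRAIN_HOURS and TOTAL_RMB come from the
# article; RMB_PER_USD is an ASSUMED exchange rate.

TRAIN_HOURS = 2.0
TOTAL_RMB = 3.0
RMB_PER_USD = 7.2  # assumption

hourly_rmb = TOTAL_RMB / TRAIN_HOURS  # implied GPU rental rate
total_usd = TOTAL_RMB / RMB_PER_USD

print(f"implied rate: {hourly_rmb:.2f} RMB/h")  # implied rate: 1.50 RMB/h
print(f"total: ${total_usd:.2f}")               # total: $0.42
```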

The value lies in completeness. Unlike toy examples that stop at character-level prediction, or partial implementations that skip the alignment phase, MiniMind walks through every stage a production model goes through. You see how pretraining loss curves differ from fine-tuning dynamics, how LoRA reduces memory overhead, and how DPO shapes model behavior differently from supervised fine-tuning.
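The difference between DPO and supervised fine-tuning shows up in the loss. SFT maximizes the likelihood of one target response; DPO instead scores a pair of responses and rewards the policy for raising the log-probability of the preferred one relative to the rejected one, measured against a frozen reference model. The function below is a minimal sketch of the published DPO objective (Rafailov et al., 2023) for a single pair. The β value and the use of summed sequence log-probabilities are assumptions, and MiniMind's trainer may batch, average, or mask differently.

```python
import math

def softplus(x):
    # log(1 + exp(x)) computed without overflow for large |x|
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one preference pair.

    Inputs are summed token log-probabilities of the chosen and rejected
    responses under the trainable policy and the frozen reference model.
    """
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    return softplus(-logits)  # equals -log(sigmoid(logits))

print(dpo_loss(-10.0, -10.0, -10.0, -10.0))  # policy == reference: log 2
print(dpo_loss(-5.0, -15.0, -10.0, -10.0))   # prefers chosen: lower loss
```

When the policy equals the reference, every pair scores log 2 ≈ 0.693. The loss falls only as the policy widens the chosen-versus-rejected gap beyond what the reference model already had.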

How It Compares: nanoGPT, litgpt, and the Educational Tooling Space

The project joins a set of from-scratch implementations rather than competing with them. Karpathy's nanoGPT set the standard for clarity—readable code that teaches transformer internals through simplicity. Lightning AI's litgpt provides production-grade recipes for larger models on multi-GPU setups, optimizing for performance and scalability.

MiniMind occupies a different niche: extreme resource constraints with pipeline completeness. Where nanoGPT excels at explaining the architecture and litgpt targets serious training runs, MiniMind answers a specific question—what can you accomplish on ordinary hardware in an afternoon? The tools complement each other. Start with nanoGPT to understand the code, use MiniMind to run the full pipeline on your 3090, graduate to litgpt when you scale up.

The Rough Edges: Installation Failures and Training Instability

To set realistic expectations, the project shows the growing pains typical of actively developed educational tools. Some users report pip installation failures during numpy metadata generation on Windows. Others report that DPO training produces garbled outputs even with unmodified code. Vision-language model (VLM) pretraining shows high loss values, around 7.5, with no clear resolution yet in the issue tracker.

These aren't showstoppers for the target audience—engineers comfortable debugging dependency conflicts and interpreting training metrics—but they signal where the project is still hardening. Expect to troubleshoot rather than plug-and-play.
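Interpreting a number like the 7.5 VLM loss is easier with a reference point. Assuming the figure is mean token-level cross-entropy in nats, a model that guesses uniformly over a vocabulary of V tokens scores ln V, and exp(loss) gives the equivalent perplexity. The 6,400-token vocabulary below is an assumption for illustration.

```python
import math

def interpret_loss(ce_loss_nats, vocab_size):
    """Return (perplexity, uniform-guessing loss) for a mean cross-entropy.

    Assumes ce_loss_nats is token-level cross-entropy using natural logs.
    """
    perplexity = math.exp(ce_loss_nats)
    uniform_loss = math.log(vocab_size)  # loss when every token is equally likely
    return perplexity, uniform_loss

ppl, baseline = interpret_loss(7.5, vocab_size=6400)  # vocab size is assumed
print(f"perplexity ~ {ppl:.0f}, uniform baseline loss = {baseline:.2f}")
```

Under these assumptions, a loss of 7.5 means a perplexity of roughly 1,800, compared with a uniform-guessing baseline loss of about 8.76.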

Who This Opens Doors For (And What It Still Can't Do)

MiniMind serves students learning LLM internals, researchers prototyping ideas before committing serious compute, and hobbyists who want to understand transformers beyond abstractions. If you own a 3090 and want hands-on experience with the full training pipeline, this makes it possible.

It won't produce production-grade models. It won't approach frontier capabilities. It assumes you're comfortable with Python environments and transformer concepts. But it answers the question thousands of engineers have asked: "Can I actually train an LLM on my hardware?" The answer, concretely and for the first time at this accessibility level, is yes.

jingyaogong/minimind

🧠 Train a 64M-parameter LLM from scratch in just 2h!