Beads: AI Coding Agents That Remember Past 1 Hour

AI coding agents like Claude and Cursor hit a wall after about an hour—they forget context, lose track of dependencies, and waste tokens re-analyzing problems. Beads gives them a memory system using task graphs and topological sort, extending sessions to 12+ hours according to DoltHub's testing. We cover the technical approach, real-world results, and honest trade-offs including merge conflict issues.

Your AI coding agent forgets everything after an hour. It re-analyzes the same dependencies, loses track of what it already fixed, and burns tokens reconstructing context from scratch. DoltHub's team tested agents with and without structured memory: raw sessions maxed out at about an hour of productive work. With Beads, they hit 12 hours.

The 1-Hour Wall: Why AI Agents Lose Context

Claude, Cursor, and GitHub Copilot handle individual tasks well—refactor this function, write that test, debug a race condition. String those tasks across a multi-hour project, and they forget what they've done. Each new session starts cold. The agent re-reads files, re-infers dependencies, and sometimes undoes its own prior work because it has no structured memory of the task graph.

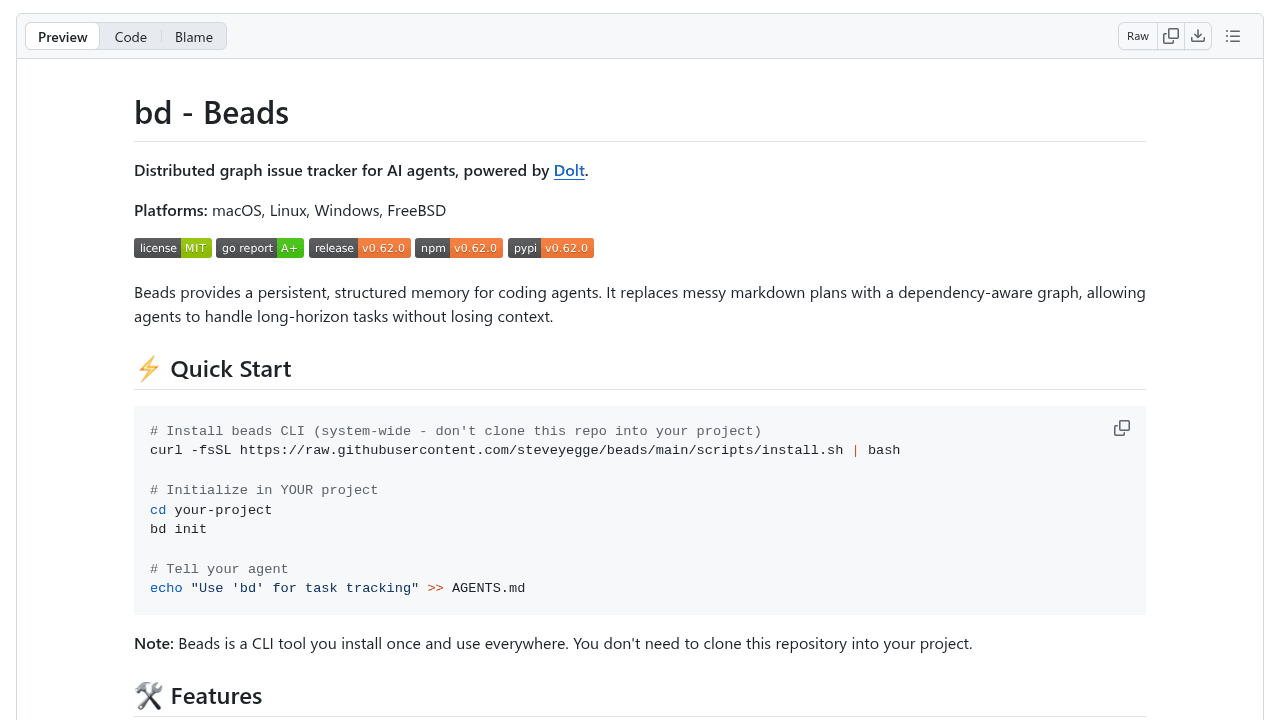

Beads addresses what its creator Steve Yegge calls the "50 First Dates" problem: agents waking up with no memory of yesterday's progress. Without a persistent task ledger, long-horizon work collapses into repetitive context reconstruction.

How Beads Works: Task Graphs Instead of Token Dumps

Beads stores issues as JSONL files in a .beads/ directory, version-controlled via Git. Each task tracks its dependencies explicitly, for example: "implement feature X" is blocked on "refactor module Y." When an agent asks for the next task, Beads runs a topological sort and returns only unblocked work.
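To make the idea concrete, here is a minimal sketch of selecting unblocked work from JSONL records. The field names (`id`, `status`, `blocked_by`) and the sample tasks are assumptions chosen for illustration; they are not taken from the actual Beads schema.

```python
import json

# Hypothetical records: the field names ("id", "status", "blocked_by") are
# assumptions for illustration, not the actual Beads schema.
SAMPLE_JSONL = """\
{"id": "t1", "title": "Refactor module Y", "status": "open", "blocked_by": []}
{"id": "t2", "title": "Implement feature X", "status": "open", "blocked_by": ["t1"]}
{"id": "t3", "title": "Write release notes", "status": "closed", "blocked_by": []}
{"id": "t4", "title": "Add tests for module Y", "status": "open", "blocked_by": ["t3"]}
"""


def load_tasks(text):
    """Parse one JSON object per non-empty line, keyed by task id."""
    tasks = {}
    for line in text.splitlines():
        if line.strip():
            task = json.loads(line)
            tasks[task["id"]] = task
    return tasks


def ready_tasks(tasks):
    """Return ids of open tasks whose blockers are all closed, sorted by id."""
    ready = []
    for task_id, task in tasks.items():
        if task["status"] != "open":
            continue
        if all(tasks[dep]["status"] == "closed" for dep in task["blocked_by"]):
            ready.append(task_id)
    return sorted(ready)


print(ready_tasks(load_tasks(SAMPLE_JSONL)))  # ['t1', 't4']
```

In this sketch "Implement feature X" stays hidden until "Refactor module Y" is closed, so the agent only ever sees work it can start immediately.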

This avoids context rot because the LLM no longer has to analyze the entire dependency graph on every request. Instead of dumping 500 tasks into a prompt and asking "what's ready?", the agent gets a pre-filtered list. It's the difference between giving a junior developer a sprawling Jira board and handing them three tickets with clear dependencies already resolved.

SQLite caching handles query performance. Git branching supports parallel workstreams. The architecture is file-based—easy to inspect, diff, and debug when things break.
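As a rough illustration of how a SQLite cache over JSONL records could answer "what is ready?" with a single query, consider the sketch below. The table layout, field names, and query are assumptions made for this example; the source does not describe the cache schema Beads actually uses.

```python
import json
import sqlite3

# Hypothetical records and schema, for illustration only.
LINES = [
    '{"id": "t1", "status": "open", "blocked_by": []}',
    '{"id": "t2", "status": "open", "blocked_by": ["t1"]}',
    '{"id": "t3", "status": "closed", "blocked_by": []}',
    '{"id": "t4", "status": "open", "blocked_by": ["t3"]}',
]


def build_cache(lines):
    """Load JSONL records into an in-memory SQLite database."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE tasks (id TEXT PRIMARY KEY, status TEXT NOT NULL)")
    db.execute("CREATE TABLE deps (task_id TEXT NOT NULL, blocker_id TEXT NOT NULL)")
    for line in lines:
        rec = json.loads(line)
        db.execute("INSERT INTO tasks VALUES (?, ?)", (rec["id"], rec["status"]))
        db.executemany(
            "INSERT INTO deps VALUES (?, ?)",
            [(rec["id"], blocker) for blocker in rec["blocked_by"]],
        )
    return db


# Open tasks that have no blocker still in a non-closed state.
READY_SQL = """
SELECT t.id FROM tasks AS t
WHERE t.status = 'open'
  AND NOT EXISTS (
    SELECT 1 FROM deps AS d
    JOIN tasks AS b ON b.id = d.blocker_id
    WHERE d.task_id = t.id AND b.status != 'closed'
  )
ORDER BY t.id
"""


def ready_ids(db):
    """Return ids of ready tasks using the cached tables."""
    return [row[0] for row in db.execute(READY_SQL)]


print(ready_ids(build_cache(LINES)))  # ['t1', 't4']
```

The JSONL files remain the source of truth in this arrangement; the database is a disposable index that can be rebuilt from them at any time.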

Real-World Testing: DoltHub's 12-Hour Sessions

DoltHub published concrete results: agents with Beads produced useful work for over 12 hours, compared with the typical one-hour ceiling. That's the difference between completing a feature migration and abandoning it halfway when the agent starts rewriting code it already fixed.

The improvement comes from offloading memory to structure. The agent doesn't need to "remember" that task 47 is blocked on task 12; the topological sort handles that. It can focus token budget on solving problems instead of reconstructing project state.
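The ordering step itself is small. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (3.9+) on a hypothetical three-task graph; the task names and helper functions are illustrative and say nothing about how Beads implements its sort internally.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each key is blocked on the tasks in its set.
blocked_on = {
    "task-47": {"task-12"},
    "task-12": set(),
    "task-50": {"task-47", "task-12"},
}


def work_order(graph):
    """Return one valid order in which every task follows its blockers."""
    return list(TopologicalSorter(graph).static_order())


def next_unblocked(graph, done):
    """Return tasks not yet done whose blockers are all in `done`."""
    return sorted(t for t, deps in graph.items() if t not in done and deps <= done)


print(work_order(blocked_on))                  # ['task-12', 'task-47', 'task-50']
print(next_unblocked(blocked_on, set()))       # ['task-12']
print(next_unblocked(blocked_on, {"task-12"})) # ['task-47']
```

Because the graph encodes that task 47 waits on task 12, the agent never has to carry that fact in its prompt; it only asks for the next unblocked item.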

The Merge Conflict Problem (And Why It Matters)

A Hacker News user reported merge conflicts when multiple agents worked on Beads-managed tasks simultaneously. File-based storage makes this inevitable: two agents editing .beads/issues.jsonl at the same time will collide, just like any other shared file.
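A simplified model shows why such collisions happen. The sketch below compares two agents' versions of a task file against a common base and reports the records both sides changed differently. It is keyed by a hypothetical `id` field and is only an illustration: Git itself merges by lines rather than by record, and nothing here reflects how Beads detects or resolves conflicts.

```python
import json

# Hypothetical records; "id", "status", and "assignee" are illustrative fields.
BASE = [
    '{"id": "t1", "status": "open", "assignee": null}',
    '{"id": "t2", "status": "open", "assignee": null}',
]
AGENT_A = [
    '{"id": "t1", "status": "in_progress", "assignee": "agent-a"}',
    '{"id": "t2", "status": "open", "assignee": null}',
]
AGENT_B = [
    '{"id": "t1", "status": "in_progress", "assignee": "agent-b"}',
    '{"id": "t2", "status": "closed", "assignee": null}',
]


def by_id(lines):
    """Parse JSONL lines into a dict keyed by record id."""
    records = (json.loads(line) for line in lines)
    return {rec["id"]: rec for rec in records}


def conflicting_ids(base, ours, theirs):
    """Ids that both sides changed relative to base, to different values."""
    conflicts = []
    for task_id in sorted(set(ours) | set(theirs)):
        original = base.get(task_id)
        a, b = ours.get(task_id), theirs.get(task_id)
        if a != original and b != original and a != b:
            conflicts.append(task_id)
    return conflicts


print(conflicting_ids(by_id(BASE), by_id(AGENT_A), by_id(AGENT_B)))  # ['t1']
```

Only `t1` conflicts: both agents claimed it, while `t2` was changed by one side and merges cleanly.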

This is a trade-off, not a design flaw. File-based simplicity (inspect with cat, version with Git, edit manually when needed) competes with multi-agent coordination. Other approaches explore state-ledger systems for better concurrency, but they add operational complexity. Beads optimizes for single-agent long-horizon work, where this trade-off makes sense.

Alternatives: Markdown Trackers and State Ledgers

One developer built a markdown-based task tracker as a faster, simpler alternative—evidence that some workflows don't need Beads' dependency tracking. Others prefer state-ledger designs for multi-agent environments. Different teams face different constraints.

Beads solves task planning and dependency tracking. Simpler markdown tools work for linear workflows. State ledgers handle concurrent agents. They're exploring different parts of the solution space.
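For contrast, a linear markdown tracker needs almost no machinery. The sketch below assumes GitHub-style task-list checkboxes (`- [ ]` and `- [x]`); that format and the sample tasks are assumptions for illustration, not a description of the tracker mentioned above.

```python
import re

# Matches "- [ ] text" or "* [x] text"; the checkbox format is an assumption.
CHECKBOX = re.compile(r"^\s*[-*]\s+\[( |x|X)\]\s+(.*)$")

SAMPLE = """\
# Migration plan
- [x] Refactor module Y
- [ ] Implement feature X
- [ ] Update docs
"""


def next_task(markdown):
    """Return the first unchecked item, or None if everything is done."""
    for line in markdown.splitlines():
        match = CHECKBOX.match(line)
        if match and match.group(1) == " ":
            return match.group(2).strip()
    return None


print(next_task(SAMPLE))  # Implement feature X
```

Order in the file stands in for the dependency graph here, which is adequate only while the work really is linear.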

Adoption Signal: 20+ Community Tools in Weeks

Steve Yegge built Beads in 6 days with Claude and released it in October 2025. By January 2026, the project had 19,883 GitHub stars and 20+ community integrations—web UIs like beads-ui, terminal viewers like bdui, editor extensions for VS Code and Neovim, even a Jira sync tool.

It's available as an npm package, Homebrew formula, and Go binary. Developers are building on it, which matters more than star counts.

Should You Use Beads?

If you're already pushing AI agents past one-hour sessions and hitting memory limits, Beads is worth testing. Best fit: single-agent work on complex codebases with clear dependency chains. Watch out for: multi-agent parallelism (merge conflicts incoming) and Windows compatibility (the project is working through 15 open issues as the platform matures).

The community tools help—web UIs reduce command-line friction, editor plugins surface task context inline. Start small: track dependencies for one feature, see if your agent's session length improves. DoltHub's results suggest it will.

steveyegge/beads

Beads - A memory upgrade for your coding agent