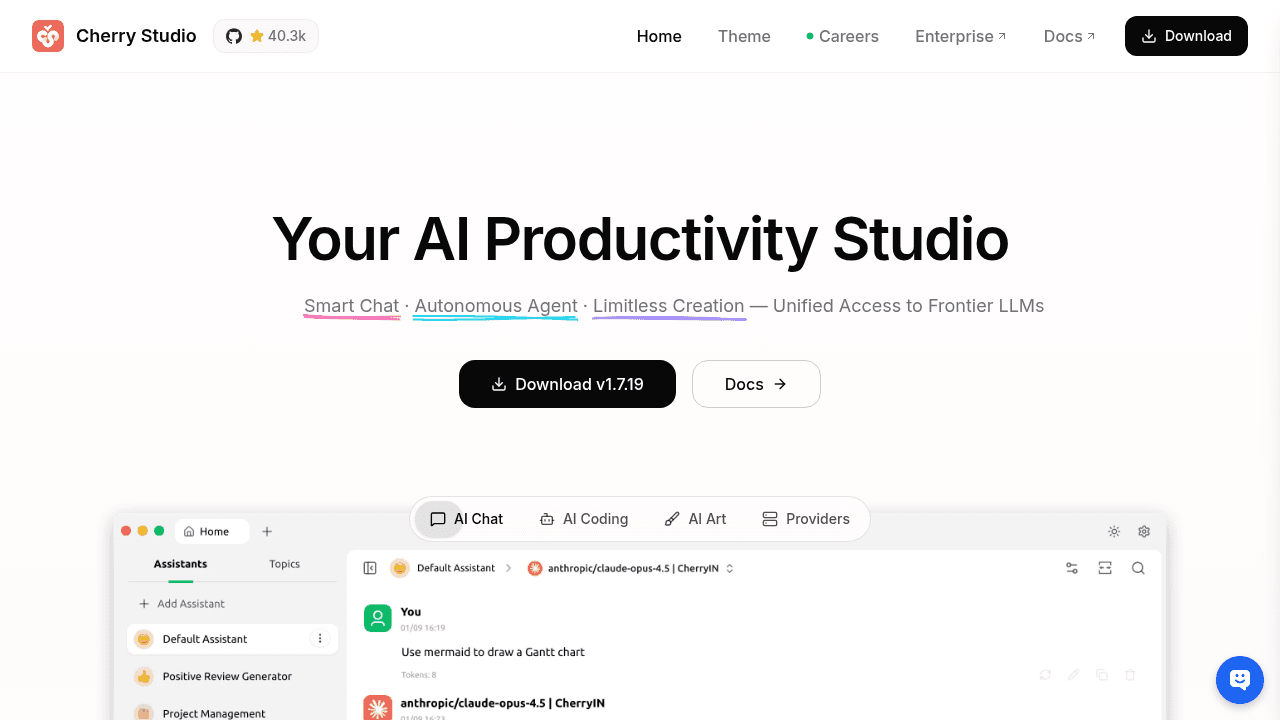

Cherry Studio: One Desktop App for All Your AI Models

Managing multiple AI providers means browser tab chaos and fragmented workflows. Cherry Studio consolidates cloud services, local models, and web interfaces into a single desktop client, with 300+ pre-configured assistants and MCP support. Its reported 7.9x growth in Q2 2025 suggests this fragmentation problem resonates widely.

You've got ChatGPT open in one browser tab, Claude in another, a terminal window running Ollama locally, and somewhere in that mess is the Gemini tab whose responses you were comparing twenty minutes ago. Your API keys are scattered across config files, conversation histories live in four different places, and switching between providers means reloading context every single time.

Cherry Studio consolidates that chaos into a single desktop client: cloud services like OpenAI and Anthropic, local models via Ollama and LM Studio, and web interfaces for Claude and Perplexity, all accessible from one window. No more tab archaeology to find the conversation where GPT-4 gave you a better answer than Claude did.

The Multi-LLM Tax: Browser Tabs, API Keys, and Lost Context

Working across multiple LLM providers isn't exotic anymore—it's standard practice. Developers test prompts against different models to find which handles their use case best. Researchers compare outputs to understand model behavior. Teams need consistency when some members prefer Anthropic's reasoning while others default to OpenAI's speed.

The infrastructure hasn't caught up. Each provider maintains its own web interface with different UX patterns. API configurations live in separate dashboards. Conversation histories fragment across platforms. The overhead of managing this fragmentation compounds when you're doing it dozens of times per day.

Desktop clients solve workflow problems that web interfaces can't. Local storage for all conversations regardless of provider. Unified keyboard shortcuts. System-level integration. One authentication flow instead of five.

What Cherry Studio Actually Does

The core value proposition is straightforward: connect to any LLM provider through a single interface. For cloud services, that means OpenAI, Anthropic, Google's Gemini models, Azure OpenAI, and the Moonshot API. For models running locally, it means Ollama, LM Studio, and LocalAI. Direct web access to Claude, ChatGPT, and Perplexity is also available when you need their native features.
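One way to picture "a single interface over many providers" is a small registry that maps each provider to an endpoint and builds the same request shape for all of them. The sketch below is illustrative only and is not Cherry Studio's code: the `Provider` class, the `PROVIDERS` table, and the `build_chat_request` helper are hypothetical names, the model name `llama3` is a placeholder, and the local URLs assume the usual default ports for the OpenAI-compatible servers that Ollama and LM Studio expose, which may differ on your machine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    name: str
    base_url: str  # assumed default; adjust for your setup
    local: bool    # True when the model runs on this machine


# Hypothetical registry for illustration; not taken from Cherry Studio's source.
PROVIDERS = {
    "openai": Provider("openai", "https://api.openai.com/v1", local=False),
    "ollama": Provider("ollama", "http://localhost:11434/v1", local=True),
    "lmstudio": Provider("lmstudio", "http://localhost:1234/v1", local=True),
}


def build_chat_request(provider_key: str, model: str, prompt: str) -> dict:
    """Describe an OpenAI-style chat completion call without sending it."""
    provider = PROVIDERS[provider_key]
    return {
        "url": f"{provider.base_url}/chat/completions",
        "json": {
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
        },
    }


if __name__ == "__main__":
    # Same helper, different backends: only the registry entry changes.
    for key, model in [("openai", "gpt-4"), ("ollama", "llama3")]:
        print(build_chat_request(key, model, "Hello")["url"])
```

The point of the design is that the calling code never branches on the provider; adding a backend means adding one registry entry. Providers whose APIs are not OpenAI-compatible would need their own request builder, which this sketch leaves out.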

Conversations stay in one place regardless of which model or provider handled them. Switch from GPT-4 to Claude 3.5 Sonnet mid-thread without losing context. Compare responses from different models side-by-side without juggling browser windows.
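Switching models mid-thread without losing context comes down to keeping the history in a provider-neutral form and replaying it to whichever backend handles the next turn. The following is a minimal sketch under that assumption, not a description of Cherry Studio's internals: `Conversation`, `ask`, and `fake_backend` are hypothetical names, and the backends are stand-ins that report how much history they received instead of calling a real API.

```python
from dataclasses import dataclass, field
from typing import Callable

Backend = Callable[[list], str]  # takes the message history, returns a reply


@dataclass
class Conversation:
    messages: list = field(default_factory=list)

    def ask(self, model: str, backend: Backend, prompt: str) -> str:
        self.messages.append({"role": "user", "content": prompt})
        # Send the full history to whichever backend is chosen for this turn,
        # stripped of local bookkeeping such as the "model" tag.
        history = [
            {"role": m["role"], "content": m["content"]} for m in self.messages
        ]
        reply = backend(history)
        # Record which model answered so the thread stays auditable.
        self.messages.append(
            {"role": "assistant", "content": reply, "model": model}
        )
        return reply


def fake_backend(label: str) -> Backend:
    """Stand-in for a real API call; reports how much history it received."""
    def call(history: list) -> str:
        return f"{label} saw {len(history)} message(s)"
    return call


if __name__ == "__main__":
    chat = Conversation()
    print(chat.ask("gpt-4", fake_backend("gpt-4"), "Summarize this file."))
    print(chat.ask("claude-3.5-sonnet", fake_backend("claude"), "Now shorten it."))
```

Because the second backend receives the first model's answer as part of the history, the new model can pick up where the previous one left off. A side-by-side comparison is the same idea with two backends given identical history.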

The architecture supports this breadth without forcing you into a lowest-common-denominator interface. Provider-specific features remain accessible—you're not stuck with generic chat when a model offers specialized capabilities.

Beyond Basic Unification: Assistants, MCP, and Agentic Features

Cherry Studio extends beyond simple consolidation. It ships 300+ pre-configured assistants for common workflows, which removes the setup tax of prompt engineering for routine tasks. Model Context Protocol (MCP) support lets models interact with external tools and data sources. Autonomous coding features handle repetitive development tasks, and private deployment options serve teams that can't route queries through third-party infrastructure.
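For readers unfamiliar with Model Context Protocol, the wire format is JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The sketch below only builds such a message to show its shape. The `mcp_tool_call` helper, the tool name `search_docs`, and its arguments are hypothetical, and nothing here reflects how Cherry Studio itself constructs or sends MCP traffic.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id per request


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


if __name__ == "__main__":
    # "search_docs" is a made-up tool exposed by an imaginary MCP server.
    print(mcp_tool_call("search_docs", {"query": "rate limits"}))
```

In a real session the client would first initialize the connection and discover available tools before calling one; those steps are omitted here.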

These additions address workflow friction points that emerge once you've solved the basic access problem. If you're already managing multiple providers, you're probably also dealing with repetitive prompt templates, context gathering from external sources, and standardization across team members.

Momentum and Growing Pains

The project reached 40,000+ GitHub stars with 7.9x growth in Q2 2025, which is validation that multi-LLM fragmentation resonates widely. The 239 releases since launch signal that the maintainers are shipping fast, though that pace creates occasional turbulence.

Recent 1.6.x versions have known bugs, including GPT-5 parameter settings not working and custom parameter failures. The project is working through the challenges of maintaining compatibility across a dozen APIs with different conventions and update cycles. Hundreds of open issues reflect both high usage and the complexity of keeping up with fast-moving LLM providers.

Who This Solves Problems For

Cherry Studio makes sense when you're actively working across providers, not just occasionally trying alternatives. Developers A/B testing prompts across models benefit from unified conversation history. Research teams comparing model behavior need consistent interfaces for fair evaluation. Organizations standardizing on multi-LLM workflows gain deployment control.

If you're satisfied with a single provider's web interface, the desktop client adds complexity without solving problems you actually have. But if your browser tab bar looks like an LLM provider directory, consolidation delivers compound returns on the time you're already spending in these tools.

CherryHQ/cherry-studio

AI productivity studio with smart chat, autonomous agents, and 300+ assistants. Unified access to frontier LLMs